Ambient.ai Raises $52M Led by a16z for AI-Enabled Security Camera Breach Alerts


Security, as in “Hey you, you can’t go in there,” quickly becomes a complicated, arguably impossible task once you get past a few buildings and cameras. Who can watch everywhere at once, and send someone in time to prevent a problem? Ambient.ai isn’t the first to claim that AI can, but it may be the first to really pull it off at scale, and it has raised $52 million to keep growing.

The problem with today’s processes is one that anyone can point out. If you have a modern company or school campus with dozens or hundreds of cameras, they produce so much footage and data that even a dedicated security team will have trouble keeping up with it. As a result, not only is the team more likely to miss an important event, but it is also up to its ears in false alarms and noise.

“Victims always look to the cameras, hoping that someone is coming to their aid… but this is not the case,” Shikhar Shrestha, CEO and co-founder of Ambient.ai, told TechCrunch. “The best-case scenario is you wait for the event to happen, you go and pull the video, and you work from there. We have the cameras, we have the sensors, we have the officers. What is missing is the brain in the middle.”

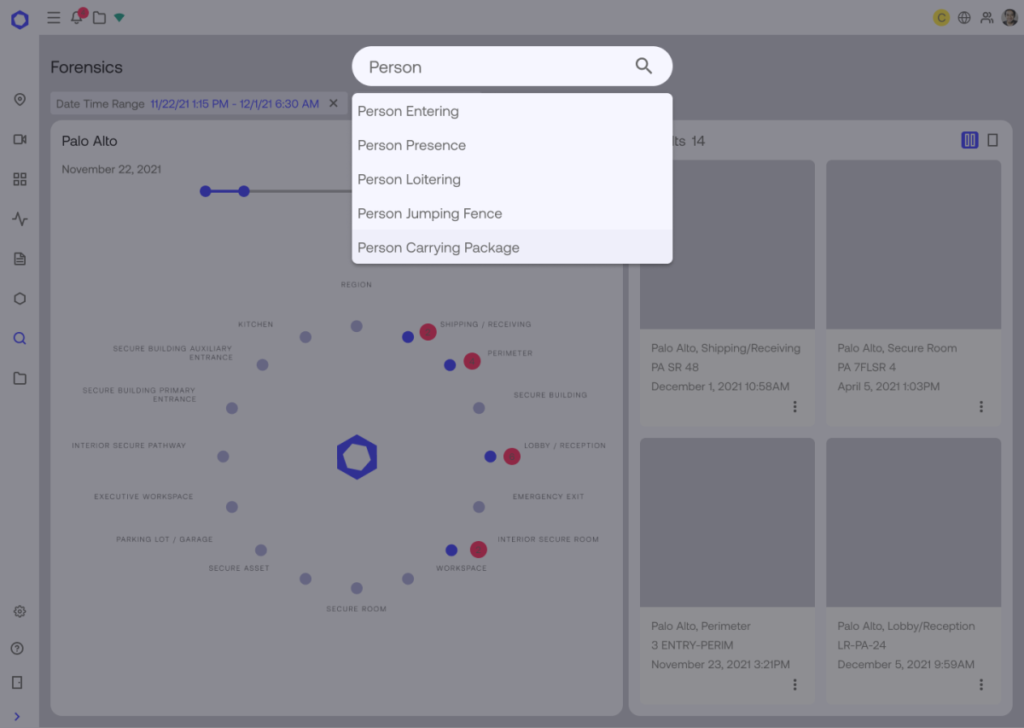

Clearly, Shrestha’s company is looking to provide the brain: a central visual processing unit for live security footage that can tell when something is going wrong and immediately alert the right people. And it aims to do so without the bias that threatens such efforts, and without facial recognition.

Others have hit on this particular idea before now, but so far none has seen serious adoption. The first generation of automatic image recognition, Shrestha said, was simple motion detection, little more than checking whether pixels were moving around on the screen, with no insight into whether the cause was a tree or a home invader. Next came the use of deep learning to recognize objects: identifying a gun in a hand or a breaking window. This proved useful but limited and somewhat high-maintenance, requiring a lot of scene- and object-specific training.

“The insight was, if you look at what humans do to understand a video, we take in a lot of other information: Is the person sitting or standing? Are they opening a door, are they walking or running? Are they indoors or outdoors, is it daytime or nighttime? We bring all that together to create a kind of comprehensive understanding of the scene,” explained Shrestha. “We use computer vision intelligence to mine the footage for a whole range of events. We break down every task and call it a primitive: interactions, objects, etc., then we combine those building blocks to form a single ‘signature.’”

A signature might be something like “a person sitting in their car for a long time at night,” or “a person standing near a security checkpoint without interacting with anyone,” or any number of other things. Some have been tweaked and added by the team, and some were arrived at independently by the model, which Shrestha described as “a managed semi-supervised approach.”
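The idea of combining primitives into a signature can be illustrated with a minimal Python sketch. It is not Ambient.ai's implementation: the `Observation` and `Signature` types, the primitive labels, and the ten-minute threshold are all hypothetical, chosen only to mirror the "sitting in a car for a long time at night" example.

```python
from dataclasses import dataclass

# Hypothetical types and labels; the article does not describe the real data model.
@dataclass(frozen=True)
class Observation:
    primitives: frozenset  # primitives detected in the scene, e.g. {"person", "sitting"}
    duration_s: float      # how long those primitives have held, in seconds

@dataclass(frozen=True)
class Signature:
    name: str
    required: frozenset          # every one of these primitives must be present
    min_duration_s: float = 0.0  # and must have held for at least this long

    def matches(self, obs: Observation) -> bool:
        return self.required <= obs.primitives and obs.duration_s >= self.min_duration_s

# Assumed example signature; the 600-second threshold is illustrative only.
LOITERING_IN_CAR = Signature(
    name="person sitting in car for a long time at night",
    required=frozenset({"person", "sitting", "in_car", "night"}),
    min_duration_s=600,
)

def matched_signatures(obs, signatures):
    """Return the names of all signatures the observation satisfies."""
    return [s.name for s in signatures if s.matches(obs)]
```

In this sketch a signature fires only when every required primitive is present and has persisted long enough, so the same person sitting in a car for thirty seconds, or during the day, would not raise an alert.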

The advantage of using AI to monitor a hundred video streams at once is obvious, even if you assume the AI is only, say, 80% as good as a human at spotting something bad happening. With no drawbacks like distraction, fatigue or having only two eyes, the AI can apply that level of performance with no limit on time or number of feeds, meaning its chances of success are actually quite high.

But the same could be said of a proto-AI system from a few years ago that was only looking for guns. Ambient.ai is aiming for something a bit broader.

“We built the platform around the idea of privacy by design,” Shrestha said. With AI-powered security, “people assume that facial recognition is a part of it, but with our approach you have a large number of signatures, and you can have risk indicators without facial recognition. You don’t just have an image and a model that says what’s going on. We have all these different blocks that allow you to get more descriptive in the system.”

Essentially this is done by keeping each individual recognized activity free of bias. Take, for example, whether someone is sitting or standing, or how long they have been waiting outside a door: if each of these behaviors can be audited and found to be detected equally across demographics and groups, then the sum of such inferences must also be free of bias. In this way the system structurally minimizes bias.
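One way to picture such an audit is the Python sketch below. It assumes a labeled audit set of `(group, primitive, detected)` records and a 5% tolerance; both are hypothetical, and the article does not say how Ambient.ai actually performs its audits.

```python
from collections import defaultdict

def rate_gap_by_primitive(records):
    """records: iterable of (group, primitive, detected) tuples from a labeled audit set.
    Returns {primitive: highest group detection rate minus lowest}."""
    counts = defaultdict(lambda: [0, 0])  # (primitive, group) -> [detected, total]
    for group, primitive, detected in records:
        tally = counts[(primitive, group)]
        tally[0] += int(detected)
        tally[1] += 1
    rates = defaultdict(list)
    for (primitive, _group), (hits, total) in counts.items():
        rates[primitive].append(hits / total)
    return {p: max(r) - min(r) for p, r in rates.items()}

def flag_uneven(records, tolerance=0.05):
    """Primitives whose detection rate differs across groups by more than the tolerance."""
    gaps = rate_gap_by_primitive(records)
    return sorted(p for p, gap in gaps.items() if gap > tolerance)
```

A primitive that passes this kind of check for every group could be used in signatures, while one that is flagged would need retraining or removal before anything is built on top of it.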

It must be said, however, that bias is insidious and complex, and our ability to recognize and reduce it lags behind the state of the art in AI. Yet it seems intuitively true that, as Shrestha said, “If you don’t have an inference category for something that can be biased, there’s no way for that bias to come in.” Let’s hope so!
